Let me understand this better:

- Is the CDN caching requests to the main domain (the HTML requests)?

- Or is the CDN only used for static assets on a different domain?

Apart from that, does your CDN honor the cache-control headers?

These are the cache-control headers we recommend for Frontity: https://github.com/frontity/now-builder/blob/master/src/index.ts#L116-L128

    [
      // HTML.
      {
        src: `/.*`,
        headers: { "cache-control": "s-maxage=1,stale-while-revalidate" },
        dest: `/server.js`
      },
      // Static assets.
      {
        src: `/static/(.*)`,
        headers: { "cache-control": "public,max-age=31536000,immutable" },
        dest: `/static/$1`
      }
    ]

We recommend stale-while-revalidate for the HTML on small sites because that way the CDN always serves the cached response and refreshes it in the background. It's less important for big sites, because 99.9% of the visits will get a cached response anyway.

s-maxage is 1 because it's a safe default, but of course this should be configured by the site owners, depending on their needs.
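To make the interaction between s-maxage and stale-while-revalidate more concrete, here is a rough sketch of how a shared cache could classify a stored response. The `classify` function and its parameters are hypothetical names I made up for illustration, and using `Infinity` for the revalidation window is my assumption about how a CDN might treat the directive when it has no value. Check your CDN's docs for its real behavior.

```typescript
type CacheDecision = "fresh" | "stale-while-revalidate" | "miss";

// Hypothetical helper, not part of Frontity or any CDN API.
// ageSeconds: age of the stored response, or null if nothing is cached yet.
// sMaxAge:    the s-maxage value in seconds.
// swrWindow:  seconds the response may be served stale while revalidating.
//             Infinity models an unbounded window (an assumption).
function classify(
  ageSeconds: number | null,
  sMaxAge: number,
  swrWindow: number
): CacheDecision {
  // Nothing stored: the request has to go to the Frontity server.
  if (ageSeconds === null) return "miss";
  // Still within s-maxage: serve from cache, no revalidation needed.
  if (ageSeconds < sMaxAge) return "fresh";
  // Past s-maxage but inside the window: serve the stale copy right away
  // and refresh it in the background.
  if (ageSeconds - sMaxAge <= swrWindow) return "stale-while-revalidate";
  // Too old even for stale serving: the visitor waits for the server.
  return "miss";
}

// A small site whose page was cached an hour ago still answers from cache.
console.log(classify(3600, 1, Infinity));
// Without stale-while-revalidate the same visit would wait for the server.
console.log(classify(3600, 1, 0));
```

With `s-maxage=1` the copy is almost always stale on a low-traffic site, so it is the stale-while-revalidate part that keeps visitors from waiting on the server.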

For the static folder, we just set it as immutable because all the files include a unique hash.
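If your CDN or proxy doesn't take a routes array like the one above, the same policy can be sketched as a plain function. `cacheControlFor` is a hypothetical name, and checking the `/static/` prefix first is my assumption, so that the catch-all HTML rule doesn't shadow the static assets.

```typescript
// Header values copied from the recommended Frontity configuration.
const HTML_CACHE_CONTROL = "s-maxage=1,stale-while-revalidate";
const STATIC_CACHE_CONTROL = "public,max-age=31536000,immutable";

// Hypothetical helper: picks the cache-control header for a request path.
function cacheControlFor(path: string): string {
  // Files under /static/ include a unique hash, so they can be cached forever.
  if (path.startsWith("/static/")) {
    return STATIC_CACHE_CONTROL;
  }
  // Everything else is HTML rendered by the Frontity server.
  return HTML_CACHE_CONTROL;
}

console.log(cacheControlFor("/static/main.abc123.js"));
console.log(cacheControlFor("/blog/some-post/"));
```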

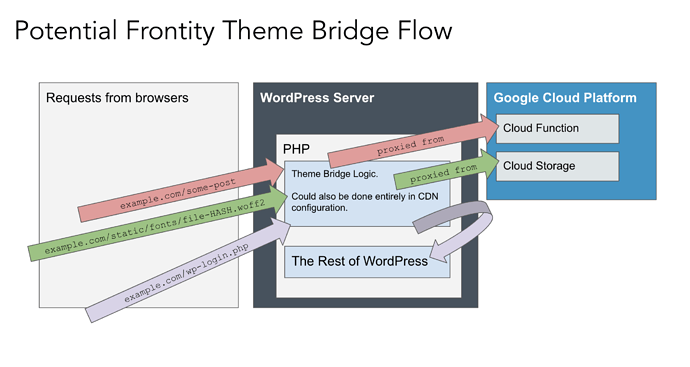

I don’t quite understand this. Point 5 does precisely that: it puts everything under the same domain. Remember that when the PHP Theme Bridge is in charge, it does an internal HTTP request and returns the response, so everything that goes through it will use the WordPress domain.

Maybe we need some diagrams with all the scenarios to make sure we're talking about the same cases.

A Frontity server should always remain quite small.

The current server size is 1 MB, including React, Koa, the Frontity core and the rest of the required libraries. I guess the most complex app possible shouldn't get bigger than 3 MB.

The client assets are a bit smaller, 600 KB. If we embed them in the server, it'd be 1.6 MB. I guess a very complex app with client assets embedded could be 5 MB, which is still a tolerable size for serverless.

Adding fonts or an image for the logo is fine. But images included in the WP content should be stored in their WordPress site, not in Frontity.

But "they shouldn't" doesn't mean "they won't"… If people start doing it, we may need to teach them that they should use WP for that.

Is option 3 using the publicPath option or doing the routing with your own proxying logic?

This is what I had in mind for point 3, and I think it might also be what you were imagining for point 5.